10.1 Preliminary for Symmetric Groups

Parity and Presentation of Symmetric Groups

parity

Each permutation $\sigma\in S_n$ can be represented by a diagram. The permutation $\sigma$ is said to be odd (resp. even) if the number of crossings in this diagram is odd (resp. even). Define $\operatorname{sgn}(\sigma)$ as $1$ if $\sigma$ is even and $-1$ if $\sigma$ is odd.

Equivalently, the parity of a permutation $\sigma$ is the parity of the number of inversions of $\sigma$, that is, of pairs $(i,j)$ with $i<j$ such that $\sigma(i)>\sigma(j)$.
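The inversion description of parity is easy to compute. The following is a minimal sketch, assuming permutations are given in one-line notation as tuples; the helper names `inversions` and `sign` are illustrative and not part of the notes.

```python
from itertools import permutations

def inversions(perm):
    """Count pairs (i, j) with i < j and perm[i] > perm[j] (one-line notation)."""
    n = len(perm)
    return sum(1 for i in range(n) for j in range(i + 1, n) if perm[i] > perm[j])

def sign(perm):
    """Return +1 for an even permutation and -1 for an odd one."""
    return -1 if inversions(perm) % 2 else 1

# The even permutations of {1, 2, 3}: exactly half of all six permutations.
even = [p for p in permutations((1, 2, 3)) if sign(p) == 1]
print(even)
```

Counting inversions directly is quadratic in the length, which is fine for small examples like this one.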

Let $s_i=(i,\,i+1)$ be the adjacent transpositions for all $1\le i\le n-1$. Then $S_n$ is generated by $s_1,\dots,s_{n-1}$.

Proposition

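The symmetric group $S_n$ has the following presentation:

$$S_n\cong\left\langle s_1,\dots,s_{n-1}\ \middle|\ s_i^2=1,\quad s_is_j=s_js_i\ (|i-j|>1),\quad s_is_{i+1}s_i=s_{i+1}s_is_{i+1}\right\rangle.$$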

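\begin{proof}Denote by $G_n$ the group defined by the presentation above. Since the adjacent transpositions satisfy these relations, we have a surjective homomorphism $G_n\to S_n$. It suffices to show $|G_n|\le n!$. Note that the case of $n=2$ holds. Suppose that $n>2$, and consider the subgroup $H\subseteq G_n$ generated by $s_1,\dots,s_{n-2}$, where $|H|\le(n-1)!$ by induction hypothesis. Note that

$$G_n=H\cup Hs_{n-1}\cup Hs_{n-1}s_{n-2}\cup\dots\cup Hs_{n-1}s_{n-2}\cdots s_1,$$

since this union contains the identity and is stable under right multiplication by each generator $s_i$. Therefore, $|G_n|\le n\,|H|\le n!$ and so $G_n\cong S_n$.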

\end{proof}

Definition

Any element $\sigma\in S_n$ can be written as a product of the $s_i$'s. If $\sigma=s_{i_1}\cdots s_{i_k}$, then the minimum value of $k$ is called the length of $\sigma$.

Cycle Types and Partitions

Definition

Any permutation can be written as a product of disjoint cycles. The cycle type is the multiset of the lengths of these cycles, listed in weakly decreasing order. For example, the cycle type of $(1\,2\,3)(4\,5)$ in $S_5$ is $(3,2)$.

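Two permutations are conjugate in $S_n$ if and only if they have the same cycle type, so the conjugacy classes of $S_n$ are indexed by the partitions $\lambda=(\lambda_1,\dots,\lambda_k)$ with $\lambda_1\ge\dots\ge\lambda_k>0$ and $\lambda_1+\dots+\lambda_k=n$.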

Write a partition $\lambda$ of $n$ in multiplicity form $\lambda=(1^{m_1},2^{m_2},\dots,n^{m_n})$, where $m_i$ is the number of parts equal to $i$, so that $\sum_i i\,m_i=n$. Then we have the following property.

Proposition

The cardinality of the conjugacy class of $S_n$ corresponding to the partition $\lambda=(1^{m_1},2^{m_2},\dots,n^{m_n})$ is

$$\frac{n!}{\prod_i i^{m_i}\,m_i!}.$$
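As a sanity check on the class-size formula $n!/\prod_i i^{m_i}m_i!$, the class sizes over all partitions of $n$ should add up to $n!$. Here is a small sketch for $n=4$; the helpers `partitions` and `class_size` are illustrative names introduced here, not notation from the notes.

```python
from collections import Counter
from math import factorial

def partitions(n, max_part=None):
    """Yield the partitions of n as weakly decreasing tuples."""
    if max_part is None:
        max_part = n
    if n == 0:
        yield ()
        return
    for first in range(min(n, max_part), 0, -1):
        for rest in partitions(n - first, first):
            yield (first,) + rest

def class_size(partition):
    """Size of the conjugacy class of cycle type `partition`: n! / prod(i^m_i * m_i!)."""
    n = sum(partition)
    denom = 1
    for i, m in Counter(partition).items():  # m = multiplicity of the part i
        denom *= i ** m * factorial(m)
    return factorial(n) // denom

sizes = {lam: class_size(lam) for lam in partitions(4)}
print(sizes)                 # class sizes indexed by cycle type
print(sum(sizes.values()))   # should equal 4! = 24
```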

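\begin{proof}Fill the positions of the cycle pattern of $\lambda$ with $1,\dots,n$ in all $n!$ possible ways, and note that

- $(a_1\,a_2\cdots a_i)$, $(a_2\cdots a_i\,a_1)$, …, $(a_i\,a_1\cdots a_{i-1})$ are the same cycle, so each cycle of length $i$ accounts for $i$ repetitions.

- $(a_1\cdots a_i)(b_1\cdots b_i)$ and $(b_1\cdots b_i)(a_1\cdots a_i)$ are the same permutation, so the $m_i$ cycles of length $i$ account for $m_i!$ repetitions.

Dividing $n!$ by $\prod_i i^{m_i}\,m_i!$ finishes the proof.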

\end{proof}

For any given $n$, define $p(n)$ as the number of partitions of $n$. Its generating function is given by the following formula.

Euler formula

The generating function for partitions is

$$\sum_{n\ge0}p(n)\,q^n=\prod_{k\ge1}\frac{1}{1-q^k},$$

where $p(n)$ is the number of partitions of $n$.
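The product form of the generating function, $\prod_{k\ge1}(1-q^k)^{-1}$, gives a direct way to tabulate partition numbers: multiply in one factor $1/(1-q^k)$ at a time and truncate at the desired degree. The sketch below assumes this standard form of Euler's formula; `partition_numbers` is an illustrative name.

```python
def partition_numbers(N):
    """Return [p(0), ..., p(N)], the coefficients of prod_{k>=1} 1/(1 - q^k) up to q^N."""
    p = [1] + [0] * N
    for k in range(1, N + 1):
        # Multiplying a series by 1/(1 - q^k) means new[n] = old[n] + new[n - k].
        for n in range(k, N + 1):
            p[n] += p[n - k]
    return p

print(partition_numbers(10))
```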

10.2 Tableaux, Tabloids and Specht Module

Definition

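Let $\lambda=(\lambda_1,\dots,\lambda_k)$ be a partition of $n$. Define the corresponding Young subgroup as

$$S_\lambda=S_{\{1,\dots,\lambda_1\}}\times S_{\{\lambda_1+1,\dots,\lambda_1+\lambda_2\}}\times\cdots\times S_{\{n-\lambda_k+1,\dots,n\}}\cong S_{\lambda_1}\times\cdots\times S_{\lambda_k}.$$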

For a trivial representation of , we have that

tableau

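Let $\lambda\vdash n$. A Young tableau of shape $\lambda$ is an array obtained by filling the boxes of the Young diagram of $\lambda$ with the numbers $1,\dots,n$, each used exactly once.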

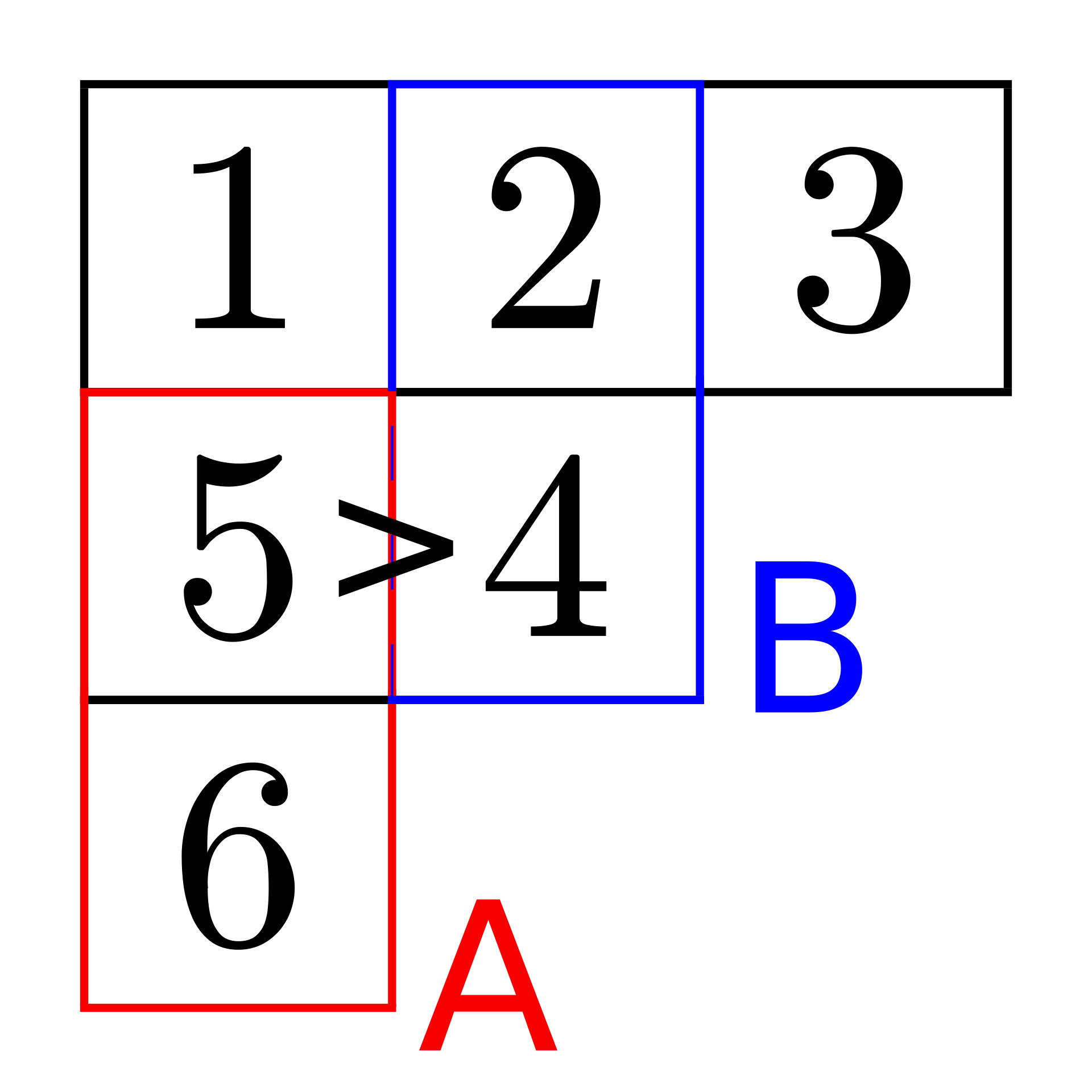

For example, see here. Note that $S_n$ acts naturally on the set of $\lambda$-tableaux by permuting the entries.

Definition

Two tableaux are equivalent if they have the same shape $\lambda$ and the same set of elements in each row. Here is an example.

tabloid

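Given a tableau $t$ of shape $\lambda$, define the tabloid (or $\lambda$-tabloid) $\{t\}$ as the equivalence class of $t$, that is, the set $\{t\}=\{t' : t'\sim t\}$.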

the Corresponding Module

Definition

For every partition $\lambda\vdash n$ the corresponding module $M^\lambda$ is the vector space with basis the set of all tabloids of shape $\lambda$, on which $S_n$ acts by $\sigma\{t\}=\{\sigma t\}$.

Examples.

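- Let $\lambda=(n)$, then $M^{(n)}$ is one-dimensional and is the trivial representation.

- Let $\lambda=(1^n)$, then $M^{(1^n)}\cong\mathbb{C}[S_n]$ and this action is regular, as $S_n$ acting on itself.

- Let $\lambda=(n-1,1)$, then $M^{(n-1,1)}\cong\mathbb{C}\{1,\dots,n\}$ and this action induces the permutation representation of $S_n$.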

Proposition

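Let $\lambda$ be a partition of $n$, and let $S_\lambda$ be the Young subgroup corresponding to $\lambda$. Then $M^\lambda\cong\operatorname{Ind}_{S_\lambda}^{S_n}\mathbf{1}$, where $\mathbf{1}$ is the trivial representation of $S_\lambda$.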

\begin{proof}Define where is the trivial -module and is the tabloid whose rows are , , …, . Then is an isomorphism of -modules.\end{proof}

the Row/Column Stabilizer and the Associated Polytabloid

Definition

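Let $\lambda$ be a partition, and let $t$ be a tableau of shape $\lambda$. Suppose $t$ has rows $R_1,\dots,R_k$ and columns $C_1,\dots,C_l$. Define subgroups of $S_n$ as $R_t=S_{R_1}\times\cdots\times S_{R_k}$ and $C_t=S_{C_1}\times\cdots\times S_{C_l}$; they are called the row-stabilizer and the column-stabilizer of $t$.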

Remark. With this definition, we have that $\{t\}=R_t\,t$.

associated polytabloid

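For any subset $H\subseteq S_n$, define the following elements of $\mathbb{C}[S_n]$:

$$H^+=\sum_{\pi\in H}\pi,\qquad H^-=\sum_{\pi\in H}\operatorname{sgn}(\pi)\,\pi,$$

where $\operatorname{sgn}(\pi)$ is the sign of $\pi$.

For any tableau $t$ with column-stabilizer $C_t$, define

$$\kappa_t=C_t^-=\sum_{\pi\in C_t}\operatorname{sgn}(\pi)\,\pi,\qquad e_t=\kappa_t\{t\},$$

where $e_t$ is called the associated polytabloid of the $\lambda$-tableau $t$.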

Remark. For any $\lambda$-tableau $t$, the associated polytabloid $e_t$ is an element of the permutation module $M^\lambda$.

Example. Here is an example of .

Lemma

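Let $t$ be a tableau and $\pi\in S_n$. Then:

- $R_{\pi t}=\pi R_t\pi^{-1}$;

- $C_{\pi t}=\pi C_t\pi^{-1}$;

- $\kappa_{\pi t}=\pi\kappa_t\pi^{-1}$;

- $e_{\pi t}=\pi e_t$, where $e_t$ is the associated polytabloid of $t$.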

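\begin{proof}Note that $\sigma\in R_{\pi t}$ iff $\sigma\{\pi t\}=\{\pi t\}$ iff $\pi^{-1}\sigma\pi\{t\}=\{t\}$ iff $\pi^{-1}\sigma\pi\in R_t$ iff $\sigma\in\pi R_t\pi^{-1}$, and the proofs of ii) and iii) are similar. Furthermore, $e_{\pi t}=\kappa_{\pi t}\{\pi t\}=\pi\kappa_t\pi^{-1}\pi\{t\}=\pi e_t$.\end{proof}

Specht Module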

Definition

Let $\lambda\vdash n$. The Specht module $S^\lambda$ is the submodule of $M^\lambda$ spanned by the polytabloids $e_t$, where $t$ is of shape $\lambda$.

Remark. Since $e_{\pi t}=\pi e_t$, any $S^\lambda$ is a cyclic module (a module that is generated by a single element), i.e., it is generated by any single polytabloid $e_t$.

Examples.


- Let . Then , and . Note that is spanned by and .

- Let . Then and . It follows that and . Thus, is isomorphic to the sign representation.

- Let . Then and . It follows that and where is the tabloid whose second line is . Thus, is the standard module.

10.3 Ordering of Partitions

Definition

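Let $\lambda=(\lambda_1,\dots,\lambda_k)$ and $\mu=(\mu_1,\dots,\mu_l)$ be partitions of $n$. Then $\lambda>\mu$ in lexicographic order if for some $i$, $\lambda_j=\mu_j$ for all $j<i$ and $\lambda_i>\mu_i$.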

Definition

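For any $\lambda\vdash n$ and $\mu\vdash n$, if $\lambda_1+\dots+\lambda_i\ge\mu_1+\dots+\mu_i$ for any $i\ge1$ (with missing parts treated as $0$), then we say $\lambda$ dominates $\mu$, written as $\lambda\trianglerighteq\mu$.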
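Dominance compares partial sums, so it is only a partial order. The sketch below assumes the usual convention that missing parts count as $0$; `dominates` is an illustrative name. It also exhibits an incomparable pair for $n=6$.

```python
from itertools import accumulate

def dominates(lam, mu):
    """Return True if lam dominates mu, comparing all partial sums."""
    if sum(lam) != sum(mu):
        raise ValueError("partitions must have the same size")
    k = max(len(lam), len(mu))
    # Pad with zeros so both partitions have k parts.
    a = list(accumulate(tuple(lam) + (0,) * (k - len(lam))))
    b = list(accumulate(tuple(mu) + (0,) * (k - len(mu))))
    return all(x >= y for x, y in zip(a, b))

print(dominates((2, 1), (1, 1, 1)))      # a comparable pair
print(dominates((3, 3), (4, 1, 1)))      # neither of these two
print(dominates((4, 1, 1), (3, 3)))      # dominates the other
```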

Example. Hasse diagram for dominance order for , see here.

dominance lemma

Let $t^\lambda$ and $s^\mu$ be tableaux of shape $\lambda$ and $\mu$ respectively. If for each index $i$, the elements of row $i$ of $s^\mu$ are all in different columns in $t^\lambda$, then $\lambda\trianglerighteq\mu$.


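\begin{proof}We rearrange the tableau $t^\lambda$ by permuting entries within each column, which does not affect the hypothesis. The elements of the first row of $s^\mu$ are all in different columns of $t^\lambda$, so we can move them to the first row of $t^\lambda$. Continue in the same way with the $i$-th row of $s^\mu$ for each $i$, so that the elements of the first $i$ rows of $s^\mu$ end up in the first $i$ rows of $t^\lambda$. The number of elements in the first $i$ rows of $s^\mu$ is $\mu_1+\dots+\mu_i$, and all of them appear in the first $i$ rows of $t^\lambda$, which contain $\lambda_1+\dots+\lambda_i$ elements. Thus $\lambda_1+\dots+\lambda_i\ge\mu_1+\dots+\mu_i$ for any $i$, and so $\lambda\trianglerighteq\mu$. As an example, see here.\end{proof}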

10.4 Complete List of Irreducible Modules

Definition

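For each $M^\lambda$, define an inner product as $\langle\{t\},\{s\}\rangle=\delta_{\{t\},\{s\}}$. Note that this inner product is $S_n$-invariant, i.e., $\langle\sigma u,\sigma v\rangle=\langle u,v\rangle$ for all $\sigma\in S_n$.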

sign lemma

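Let $H$ be a subgroup of $S_n$.

- If $\pi\in H$, then $\pi H^-=H^-\pi=\operatorname{sgn}(\pi)H^-$.

- For any $u,v\in M^\mu$, $\langle H^-u,v\rangle=\langle u,H^-v\rangle$.

- If the transposition $(b,c)\in H$, then $H^-=k\,(1-(b,c))$ for some $k\in\mathbb{C}[S_n]$.

- If $(b,c)\in H$ and $b$, $c$ are in the same row of $t$, then $H^-\{t\}=0$.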

\begin{proof}Easy. See here.\end{proof}

Corollary

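Let $t$ be a tableau of shape $\lambda$ and $s$ be a tableau of shape $\mu$, where $\lambda\vdash n$ and $\mu\vdash n$. If $\kappa_t\{s\}\neq0$, then $\lambda\trianglerighteq\mu$. Moreover, if $\lambda=\mu$ and $\kappa_t\{s\}\neq0$, then $\kappa_t\{s\}=\pm e_t$.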

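\begin{proof}Suppose $b$ and $c$ are in the same row of $s$; then they belong to different columns of $t$. Otherwise $(b,c)\in C_t$ and $\kappa_t\{s\}=0$ by the sign lemma. Then $\lambda\trianglerighteq\mu$ by the dominance lemma. If $\lambda=\mu$ and $\kappa_t\{s\}\neq0$, then $\{s\}=\pi\{t\}$ for some $\pi\in C_t$ (by the sign lemma, each pair of numbers in the same column of $t$ does not appear in the same row of $s$). It follows that $\kappa_t\{s\}=\kappa_t\pi\{t\}=\operatorname{sgn}(\pi)\kappa_t\{t\}=\pm e_t$ by the sign lemma.\end{proof}

Corollary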

If $u\in M^\mu$ and $t$ is a tableau of shape $\mu$, then $\kappa_tu$ is a multiple of $e_t$.

\begin{proof}Since $u$ is a linear combination of tabloids of shape $\mu$, the claim follows from the preceding corollary.\end{proof}

submodule theorem

Let $U$ be a submodule of $M^\mu$. Then either $U\supseteq S^\mu$ or $U\subseteq(S^\mu)^\perp$. In particular, $S^\mu$ is irreducible.

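\begin{proof}Take $u\in U$ and a $\mu$-tableau $t$; then $\kappa_tu=c\,e_t$ for some $c\in\mathbb{C}$. Suppose that there exist $u$ and $t$ such that $c\neq0$. But then $e_t=c^{-1}\kappa_tu\in U$. As $S^\mu$ is cyclic, $e_t$ generates $S^\mu$ and is inside of $U$, which yields that $S^\mu\subseteq U$. Otherwise, for any tableau $t$ and $u\in U$, we have $\kappa_tu=0$ and so

$$\langle u,e_t\rangle=\langle u,\kappa_t\{t\}\rangle=\langle\kappa_tu,\{t\}\rangle=0.$$

Therefore, $U\subseteq(S^\mu)^\perp$.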

\end{proof}

Proposition

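If there is a non-zero element $\theta\in\operatorname{Hom}_{S_n}(S^\lambda,M^\mu)$, then $\lambda\trianglerighteq\mu$. If $\lambda=\mu$, then $\theta$ is multiplication by a scalar.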

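\begin{proof}Since $\theta$ is non-zero, there exists a polytabloid $e_t$ such that $\theta(e_t)\neq0$. Moreover, as $M^\lambda$ can be decomposed as $S^\lambda\oplus(S^\lambda)^\perp$, we extend $\theta$ to a homomorphism $M^\lambda\to M^\mu$ by setting it to zero on $(S^\lambda)^\perp$. Then

$$0\neq\theta(e_t)=\theta(\kappa_t\{t\})=\kappa_t\theta(\{t\})=\kappa_t\sum_ic_i\{s_i\}.$$

Since $\theta(\{t\})=\sum_ic_i\{s_i\}$ for some $\mu$-tabloids $\{s_i\}$, there is $\lambda\trianglerighteq\mu$ by the corollary above on $\kappa_t\{s\}$.

If $\lambda=\mu$, then $\theta(e_t)=c\,e_t$ for some scalar $c$. Then for any permutation $\pi$,

$$\theta(e_{\pi t})=\theta(\pi e_t)=\pi\theta(e_t)=c\,\pi e_t=c\,e_{\pi t},$$

and so $\theta$ is a multiplication by $c$.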

\end{proof}

Theorem

The Specht modules $S^\lambda$ for $\lambda\vdash n$ form a complete list of irreducible modules of $S_n$.

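\begin{proof}Since the number of $S^\lambda$ equals the number of partitions of $n$ and so the number of conjugacy classes of $S_n$, it suffices to show $S^\lambda\not\cong S^\mu$ for any $\lambda\neq\mu$. Otherwise, suppose that $S^\lambda\cong S^\mu$ with $\lambda\neq\mu$. Since the isomorphism is non-trivial, we have a non-zero $\theta\in\operatorname{Hom}_{S_n}(S^\lambda,M^\mu)$ and so $\lambda\trianglerighteq\mu$ by the proposition above. Similarly we have $\mu\trianglerighteq\lambda$ and so $\lambda=\mu$, contradiction. Now we finish the proof.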

\end{proof}

Corollary

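The irreducible decomposition of the permutation module $M^\mu$ has the form

$$M^\mu\cong\bigoplus_{\lambda\trianglerighteq\mu}K_{\lambda\mu}\,S^\lambda,$$

where the multiplicities $K_{\lambda\mu}$ are called Kostka numbers. Moreover, $K_{\mu\mu}=1$.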

Here is an example.


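\begin{proof}Note that $K_{\lambda\mu}=\langle\chi^\lambda,\eta^\mu\rangle=\dim\operatorname{Hom}_{S_n}(S^\lambda,M^\mu)$, where $\chi^\lambda$ and $\eta^\mu$ are the characters of $S^\lambda$ and $M^\mu$, respectively. If $K_{\lambda\mu}\neq0$, then $\lambda\trianglerighteq\mu$ by the proposition above. Furthermore, since every $\theta\in\operatorname{Hom}_{S_n}(S^\mu,M^\mu)$ is multiplication by a scalar by the same proposition, we have $K_{\mu\mu}=1$.\end{proof}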

10.5 Basis of Specht Modules

Definition

A tableau $t$ is called standard if the rows and the columns of $t$ are increasing sequences.

In this section, we aim to prove the following theorem. The proof is written here.

Theorem

The set $\{e_t : t\text{ is a standard }\lambda\text{-tableau}\}$ is a basis of the Specht module $S^\lambda$.

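We call a sequence $\lambda=(\lambda_1,\dots,\lambda_l)$ of non-negative integers with $\sum_i\lambda_i=n$ a composition of $n$, and each $\lambda_i$ is called a part of $\lambda$. (Compare with a partition: a composition with $\lambda_1\ge\dots\ge\lambda_l$.) We say that a composition $\lambda$ dominates $\mu$ if for any $i$ there is $\lambda_1+\dots+\lambda_i\ge\mu_1+\dots+\mu_i$.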

Suppose $\{t\}$ is a tabloid of shape $\lambda\vdash n$. For each $1\le i\le n$, define $\{t^i\}$ as the tabloid formed by all elements $\le i$ in $\{t\}$, and define $\lambda^i$ as the composition which is the shape of $\{t^i\}$. Here is an example:

There is a way to order tabloids in the same shape.

Definition

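Let $\{s\}$ and $\{t\}$ be two tabloids of shape $\lambda$ with the corresponding composition sequences $\lambda^i$ and $\mu^i$. We say that $\{s\}$ dominates $\{t\}$, denoted by $\{s\}\trianglerighteq\{t\}$, if $\lambda^i\trianglerighteq\mu^i$ for all $i$.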

Remark. Not all tabloids can be compared. For example, take

Note that and .

Lemma

If $k<l$ and $k$ occurs in a lower row than $l$ in $\{t\}$, then $\{t\}\triangleleft(k,l)\{t\}$.

\begin{proof}Let and be composition sequences for and , respectively. If , and suppose , is in row , of respectively, thenand so for all . Here is a illustration.

\end{proof}

Corollary

If $v=\sum_ic_i\{t_i\}\in M^\mu$, we say that $\{t_i\}$ appears in $v$ if $c_i\neq0$. If $t$ is a standard tableau and $\{s\}$ appears in $e_t$, then $\{t\}\trianglerighteq\{s\}$.

\begin{proof}Write with .We do induction on number of “inversions” in , that is, the number of pairs such that and are in the same column but is in lower row than . Then for each inversion and thus . Here is an example.

For the last term , and are two inversions. By ^rswrnz, .

\end{proof}

Now we are ready to prove the theorem stated at the beginning of this section.

\begin{proof}First we prove that the standard polytabloids are linearly independent. Suppose $\sum_ic_ie_{t_i}=0$ with not all $c_i$ zero, and we label the $t_i$ in such a way that there is no $\{t_i\}$ with $\{t_i\}\triangleright\{t_1\}$. Then by the corollary $\{t_1\}$ only appears in $e_{t_1}$ and so $c_1=0$. By induction all $c_i=0$, contradiction.

Next we prove that the standard polytabloids of shape $\lambda$ span $S^\lambda$. For a polytabloid $e_t$ with tableau $t$, we may assume that the columns of $t$ are increasing by replacing $t$ with $\sigma t$ for some suitable $\sigma\in C_t$, since $e_{\sigma t}=\pm e_t$. Then if $t$ is not standard, we will find two adjacent elements $a$, $b$ in one row with $a>b$. Now we aim to find a linear combination of polytabloids where this row descent is eliminated. This algorithm uses Garnir relations, as the following example shows.

Example

Consider the following Young tableau :

There is a row descent in the second row, so we choose the subsets and as indicated.

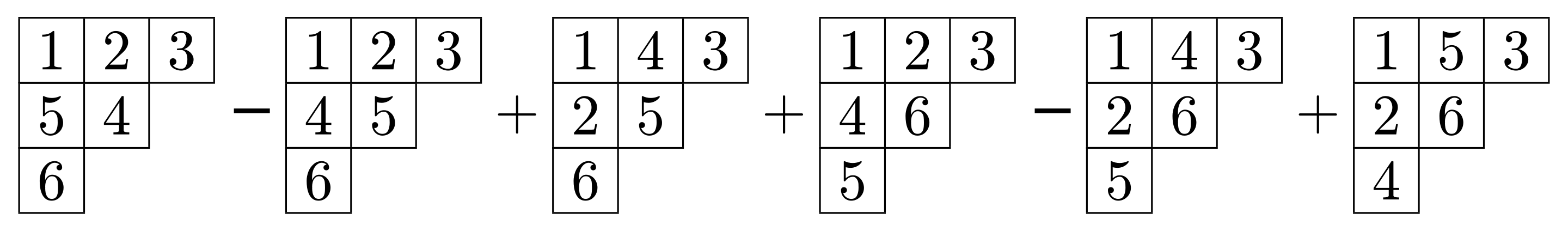

Consider all partitions of with and , that is

and they corresponds the following polytabloid

and the Garnir element . One may check and so

Therefore, the row descent in the second row is removed. One can repeatedly apply this procedure to straighten a polytabloid, eventually writing it as a linear combination of standard polytabloids.

With this example, it suffices to show .

Proposition

For a tableau $t$ with a row descent and the corresponding sets $A$ and $B$, we have $g_{A,B}\,e_t=0$, where $g_{A,B}$ denotes the Garnir element.

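\begin{proof}We claim that $S_{A\cup B}^-e_t=0$. Since $|A\cup B|$ is greater than the size of the column containing $A$, for any $\sigma\in C_t$ there exist $a,b\in A\cup B$ which are in the same row of $\sigma t$. Then by the sign lemma, $(a,b)\in S_{A\cup B}$ yields that $S_{A\cup B}^-\{\sigma t\}=0$. Since $e_t=\sum_{\sigma\in C_t}\operatorname{sgn}(\sigma)\{\sigma t\}$, there is $S_{A\cup B}^-e_t=0$.

Then consider the coset decomposition of $S_{A\cup B}$ with respect to $S_A\times S_B$. Note that

$$S_{A\cup B}=\bigsqcup_\pi\pi\,(S_A\times S_B),$$

where $\pi$ runs over the coset representatives appearing in $g_{A,B}=\sum_\pi\operatorname{sgn}(\pi)\,\pi$. Hence, $S_{A\cup B}^-=g_{A,B}\,(S_A\times S_B)^-$. For any element $\sigma\in S_A\times S_B\subseteq C_t$, $\operatorname{sgn}(\sigma)\,\sigma e_t=e_t$ and so $(S_A\times S_B)^-e_t=|S_A\times S_B|\,e_t$. Therefore,

$$0=S_{A\cup B}^-e_t=g_{A,B}\,(S_A\times S_B)^-e_t=|S_A\times S_B|\;g_{A,B}\,e_t$$

yields that $g_{A,B}\,e_t=0$.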

\end{proof}

This completes the proof of the theorem on the basis of standard polytabloids.

\end{proof}

Now we have a basis of the Specht module $S^\lambda$. The corresponding matrix representation is called Young's natural representation.

Recall that $S_n$ is generated by the adjacent transpositions $s_k=(k,\,k+1)$. For a polytabloid $e_t$ corresponding to a standard tableau $t$, we have:

- if $k$ and $k+1$ are in the same column of $t$, then $s_ke_t=-e_t$;

- if $k$ and $k+1$ are in the same row of $t$, then $s_kt$ has a row descent, and we apply the Garnir element to express $s_ke_t$ in terms of standard polytabloids;

- if $k$ and $k+1$ are in different columns and different rows of $t$, then $s_ke_t=e_{s_kt}$ is another polytabloid corresponding to a standard tableau.

Therefore, each transposition induces a linear transformation on the space spanned by the polytabloids corresponding to standard tableaux. We refer to this representation as Young's natural representation.

Remark. Every irreducible representation of has integer values, in particular defined over . Hence, all representations are of symmetric type.


10.6 Frobenius Character Formula

Frobenius character formula

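Let $\lambda=(\lambda_1,\dots,\lambda_k)\vdash n$ with $\lambda_1\ge\dots\ge\lambda_k\ge0$, and let $l_j=\lambda_j+k-j$. The value of the character $\chi^\lambda$ of $S^\lambda$ on the conjugacy class given by $\mu=(1^{i_1},2^{i_2},\dots,n^{i_n})$ with $\sum_jj\,i_j=n$ equals the coefficient of the monomial $x_1^{l_1}\cdots x_k^{l_k}$ in the polynomial

$$\Delta(x)\prod_{j=1}^{n}p_j(x)^{i_j},$$

where $\Delta(x)=\prod_{i<j}(x_i-x_j)$ and $p_j(x)=x_1^j+\dots+x_k^j$.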

Corollary

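The dimension of the Specht module $S^\lambda$ is

$$\dim S^\lambda=\frac{n!}{l_1!\cdots l_k!}\prod_{i<j}(l_i-l_j),$$

where $l_j=\lambda_j+k-j$.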

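\begin{proof}Note that the identity has cycle type $(1^n)$; then by the Frobenius character formula, $\dim S^\lambda$ is the coefficient of $x_1^{l_1}\cdots x_k^{l_k}$ in $\Delta(x)\,(x_1+\dots+x_k)^n$. By the Vandermonde determinant,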

and so the coefficient of in

is

Now we finish the proof.

\end{proof}

Hook Length Formula

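For a Young diagram $\lambda$ and the box in the $i$-th row and $j$-th column, define the hook length $h(i,j)=\lambda_i-j+\lambda'_j-i+1$, where $\lambda'_j$ is the number of boxes in the $j$-th column.

The hook length formula tells us that

$$\dim S^\lambda=\frac{n!}{\prod_{(i,j)\in\lambda}h(i,j)}.$$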

Here is an example, and here is a proof.
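The hook length formula is straightforward to evaluate. The sketch below assumes the standard definition of the hook length (arm plus leg plus one) and uses 0-based row and column indices internally; `hook_lengths` and `num_standard_tableaux` are illustrative names.

```python
from math import factorial

def hook_lengths(lam):
    """Return the hook lengths of the diagram `lam`, row by row."""
    if not lam:
        return []
    # conj[j] = number of boxes in column j (the conjugate partition).
    conj = [sum(1 for part in lam if part > j) for j in range(lam[0])]
    # With 0-based (i, j): arm = lam[i] - j - 1, leg = conj[j] - i - 1, hook = arm + leg + 1.
    return [[lam[i] - j + conj[j] - i - 1 for j in range(lam[i])]
            for i in range(len(lam))]

def num_standard_tableaux(lam):
    """Evaluate n! divided by the product of all hook lengths."""
    n = sum(lam)
    product = 1
    for row in hook_lengths(lam):
        for h in row:
            product *= h
    return factorial(n) // product

print(hook_lengths((3, 2)))
print(num_standard_tableaux((3, 2)))
```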

Young-Fibonacci Lattice

The number of standard tableaux of shape $\lambda$ is the number of paths from $\varnothing$ to the diagram $\lambda$ in the Young lattice, where each step adds one box. Here is an example.


10.7 Branching Rule

Definition

A box of $\lambda$ is called removable if its removal leaves a diagram, which is denoted by $\lambda^-$.

Definition

A box is called addable to $\lambda$ if the union of $\lambda$ and this box is a diagram, which is denoted by $\lambda^+$.

Lemma

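Let $f^\lambda$ denote the number of standard tableaux of shape $\lambda$, so that $f^\lambda=\dim S^\lambda$. We have $f^\lambda=\sum_{\lambda^-}f^{\lambda^-}$.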

\begin{proof}Recall that a basis of $S^\lambda$ is given by the polytabloids of standard tableaux. Notice that every standard tableau of shape $\lambda$ consists of $n$ in some removable box and a standard tableau of shape $\lambda^-$ for some $\lambda^-$, and this finishes the proof.\end{proof}

branching rule

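If $\lambda\vdash n$ is a partition, then

$$\operatorname{Res}^{S_n}_{S_{n-1}}S^\lambda\cong\bigoplus_{\lambda^-}S^{\lambda^-}\qquad\text{and}\qquad\operatorname{Ind}^{S_{n+1}}_{S_n}S^\lambda\cong\bigoplus_{\lambda^+}S^{\lambda^+},$$

where $\lambda^-$ (resp. $\lambda^+$) runs over the diagrams obtained from $\lambda$ by removing a removable box (resp. adding an addable box).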

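\begin{proof}Suppose that the removable boxes appear in rows $r_1<\dots<r_k$, and for each $i$, denote by $\lambda^i$ the diagram obtained by removing the box in row $r_i$. For a standard tableau $t$ with $n$ in the $r_i$-th row, denote by $t^i$ the tableau obtained by removing the box with $n$. Since $S_{n-1}$ is a finite group, for any $S_{n-1}$-modules $W\subseteq V$, we have $V\cong W\oplus V/W$ by Maschke's theorem. To prove the statement about restriction, it suffices to construct a chain of $S_{n-1}$-modules

$$0=V^{(0)}\subseteq V^{(1)}\subseteq\dots\subseteq V^{(k)}=S^\lambda$$

such that $V^{(i)}/V^{(i-1)}\cong S^{\lambda^i}$.

Define $V^{(i)}$ as the submodule of $S^\lambda$ spanned by all polytabloids $e_t$ corresponding to standard tableaux $t$ with $n$ being in one of the rows $r_1,\dots,r_i$. Then we get $V^{(0)}\subseteq\dots\subseteq V^{(k)}$, the chain of modules we desire. Define

$$\theta_i\colon M^\lambda\to M^{\lambda^i},\qquad\{t\}\mapsto\begin{cases}\{t^i\}&\text{if }n\text{ is in row }r_i\text{ of }\{t\},\\0&\text{otherwise.}\end{cases}$$

Then $\theta_i$ is an $S_{n-1}$-homomorphism. Since $n$ occupies a removable box, for a standard tableau $t$ we have

$$\theta_i(e_t)=\begin{cases}e_{t^i}&\text{if }n\text{ is in row }r_i\text{ of }t,\\0&\text{if }n\text{ is in row }r_j\text{ of }t\text{ with }j<i,\end{cases}$$

and so $\theta_i(V^{(i)})=S^{\lambda^i}$ and $V^{(i-1)}\subseteq\ker\theta_i$. It deduces that

$$\dim V^{(i)}/(V^{(i)}\cap\ker\theta_i)=\dim S^{\lambda^i}=f^{\lambda^i},$$

where $f^\nu$ denotes the number of standard tableaux of shape $\nu$, and the chain can be refined as

$$0=V^{(0)}\subseteq V^{(1)}\cap\ker\theta_1\subseteq V^{(1)}\subseteq V^{(2)}\cap\ker\theta_2\subseteq\dots\subseteq V^{(k)}=S^\lambda.$$

Since $\dim S^\lambda=f^\lambda=\sum_if^{\lambda^i}$ by the lemma above, the steps from $V^{(i)}\cap\ker\theta_i$ to $V^{(i)}$ already account for the whole dimension, so $V^{(i)}\cap\ker\theta_i=V^{(i-1)}$. Therefore, we have

$$V^{(i)}/V^{(i-1)}\cong S^{\lambda^i}.$$

Furthermore, by Frobenius reciprocity, for any $\mu\vdash n+1$ there is

$$\langle\operatorname{Ind}^{S_{n+1}}_{S_n}\chi^\lambda,\chi^\mu\rangle=\langle\chi^\lambda,\operatorname{Res}^{S_{n+1}}_{S_n}\chi^\mu\rangle,$$

and so this multiplicity is $1$ if $\lambda=\mu^-$ for some removable box of $\mu$ and $0$ otherwise. Note that $\lambda=\mu^-$ iff $\mu=\lambda^+$, thus $\operatorname{Ind}^{S_{n+1}}_{S_n}S^\lambda\cong\bigoplus_{\lambda^+}S^{\lambda^+}$. Now we finish the proof.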

\end{proof}

Corollary

The restriction $\operatorname{Res}^{S_n}_{S_{n-1}}S^\lambda$ is irreducible iff the diagram $\lambda$ has exactly one removable box, that is, $\lambda$ is a rectangle.
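The combinatorics of removable boxes can be explored directly. The sketch below lists the diagrams obtained by removing one removable box and computes the number of standard tableaux through the recursion over removable boxes, assuming partitions are given as weakly decreasing tuples; `removable` and `f_lambda` are illustrative names.

```python
from functools import lru_cache

def removable(lam):
    """Return the partitions obtained from lam by deleting one removable box."""
    out = []
    for i, part in enumerate(lam):
        below = lam[i + 1] if i + 1 < len(lam) else 0
        if part > below:  # the last box of row i is a corner
            smaller = lam[:i] + (part - 1,) + lam[i + 1:]
            out.append(tuple(p for p in smaller if p > 0))
    return out

@lru_cache(maxsize=None)
def f_lambda(lam):
    """Number of standard tableaux of shape lam, summing over removable boxes."""
    if sum(lam) <= 1:
        return 1
    return sum(f_lambda(mu) for mu in removable(lam))

print(removable((3, 2)))          # shapes appearing in the restriction
print(f_lambda((3, 2)))           # dimension obtained from the recursion
print(len(removable((3, 3, 3))))  # a rectangle has a single removable box
```

In this sketch a diagram is a rectangle exactly when `removable` returns a single shape, which matches the irreducibility criterion for the restriction.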

10.8 Symmetric Polynomials

Some Symmetric Polynomials: p, e and h

Definition

A polynomial $f(x_1,\dots,x_m)$ in $m$ variables is called symmetric if it is stable under all permutations $\sigma\in S_m$, that is, $f(x_{\sigma(1)},\dots,x_{\sigma(m)})=f(x_1,\dots,x_m)$.

The algebra of symmetric polynomials is denoted by .

Examples. They are symmetric polynomials.

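- The power sums $p_k=x_1^k+\dots+x_m^k$ for all $k\ge1$, where the corresponding generating function is $P(t)=\sum_{k\ge1}p_kt^{k-1}$.

- The elementary symmetric polynomials $e_k=\sum_{i_1<\dots<i_k}x_{i_1}\cdots x_{i_k}$ for $k\ge1$. Furthermore, define $e_0=1$. Note that $e_k=0$ when $k>m$, and the corresponding generating function is $E(t)=\sum_{k\ge0}e_kt^k=\prod_{i=1}^m(1+x_it)$.

- The complete symmetric polynomials $h_k=\sum_{i_1\le\dots\le i_k}x_{i_1}\cdots x_{i_k}$ for $k\ge1$. We also define $h_0=1$, and the corresponding generating function is $H(t)=\sum_{k\ge0}h_kt^k=\prod_{i=1}^m\frac{1}{1-x_it}$.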

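Since $H(t)E(-t)=1$, we have $\sum_{r=0}^n(-1)^re_rh_{n-r}=0$ for all $n\ge1$. Next, notice that $P(t)=\frac{d}{dt}\ln H(t)=\frac{H'(t)}{H(t)}$, then comparing coefficients in $H'(t)=P(t)H(t)$ gives

$$n\,h_n=\sum_{r=1}^np_r\,h_{n-r}.$$

Similarly $P(-t)=\frac{E'(t)}{E(t)}$. It deduces the Newton identity.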

the Newton identity

With the definition above, we have $n\,h_n=\sum_{r=1}^np_r\,h_{n-r}$ and $n\,e_n=\sum_{r=1}^n(-1)^{r-1}p_r\,e_{n-r}$.
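These identities can be checked numerically by evaluating the polynomials at integer points. The sketch below assumes the identities in the form $n\,h_n=\sum_{r=1}^np_rh_{n-r}$, $n\,e_n=\sum_{r=1}^n(-1)^{r-1}p_re_{n-r}$ and $\sum_{r=0}^n(-1)^re_rh_{n-r}=0$; the function names are illustrative.

```python
from itertools import combinations, combinations_with_replacement
from math import prod

def e(k, xs):
    """Elementary symmetric polynomial e_k evaluated at xs (e_0 = 1)."""
    return sum(prod(c) for c in combinations(xs, k))

def h(k, xs):
    """Complete symmetric polynomial h_k evaluated at xs (h_0 = 1)."""
    return sum(prod(c) for c in combinations_with_replacement(xs, k))

def p(k, xs):
    """Power sum p_k evaluated at xs."""
    return sum(x ** k for x in xs)

def newton_identities_hold(xs, max_n):
    """Check the three identities for every degree n = 1, ..., max_n."""
    for n in range(1, max_n + 1):
        if n * h(n, xs) != sum(p(r, xs) * h(n - r, xs) for r in range(1, n + 1)):
            return False
        if n * e(n, xs) != sum((-1) ** (r - 1) * p(r, xs) * e(n - r, xs)
                               for r in range(1, n + 1)):
            return False
        if sum((-1) ** r * e(r, xs) * h(n - r, xs) for r in range(n + 1)) != 0:
            return False
    return True

print(newton_identities_hold((2, 3, 5), 6))
```

A numerical check at one point is only evidence, not a proof, but it catches sign and index mistakes quickly.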

Proposition

If $A$ is an $n\times n$ matrix, then the coefficients of the characteristic polynomial $\det(tI-A)$ are given by the elementary symmetric functions of the eigenvalues $\lambda_1,\dots,\lambda_n$, with alternating signs depending on the degree of each term. The power sums $p_k$ of the eigenvalues coincide with $\operatorname{tr}(A^k)$.

Remark. There is a connection between symmetric polynomials and the solution of polynomial equations by radicals. See here. When the degree of the polynomial is less than or equal to $4$, the roots can be obtained from the coefficients by a combination of addition, subtraction, multiplication, division, and taking roots.

Schur Polynomial

Definition

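Suppose $\lambda$ is a partition of $n$ of length at most $m$. The Schur polynomial $\mathscr s_\lambda(x_1,\dots,x_m)$ is defined by

$$\mathscr s_\lambda=\frac{\det\left(x_j^{\lambda_i+m-i}\right)_{1\le i,j\le m}}{\det\left(x_j^{m-i}\right)_{1\le i,j\le m}}.$$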

For example, when , . When and , . We can prove them by computing directly, or by the following proposition.

Jacobi-Trudi formula

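We have

$$\mathscr s_\lambda=\det\left(h_{\lambda_i-i+j}\right)_{1\le i,j\le m},$$

where $h_k=0$ for $k<0$.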

\begin{proof}Let be a composition. PutConsider the elementary symmetric polynomial in variables with and write them to matrix

We claim that . Consider generating function for

then by there is

Take the coefficient of and we have

With , we can prove the claim. Thus, and so . For , the matrix and so . It follows that

where . Now we finish the proof.

\end{proof}

We have a more direct formula for $\mathscr s_\lambda$ by considering generalized tableaux of shape $\lambda$.

Definition

We place the numbers $1,\dots,m$ into a tableau of shape $\lambda$, allowing repetition. Such a tableau is called semistandard if the rows are weakly increasing (non-decreasing) while the columns are strictly increasing sequences.

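If $T$ is semistandard and $\mu_i$ is the number of entries of $T$ equal to $i$, set

$$x^T=x_1^{\mu_1}x_2^{\mu_2}\cdots x_m^{\mu_m}.$$

Proposition

For $\lambda$ with length at most $m$, the Schur polynomial in $m$ variables is $\mathscr s_\lambda(x_1,\dots,x_m)=\sum_Tx^T$, where the sum runs over all semistandard tableaux $T$ of shape $\lambda$ with entries in $\{1,\dots,m\}$.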
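The tableau formula and the determinantal definition can be compared numerically. The sketch below enumerates semistandard tableaux by backtracking and evaluates both expressions at an integer point with distinct coordinates; it assumes the ratio-of-determinants definition with exponents $\lambda_i+m-i$, and all function names are illustrative.

```python
from fractions import Fraction
from itertools import permutations
from math import prod

def ssyt(shape, m):
    """Yield semistandard tableaux of `shape` with entries in 1..m, as tuples of rows."""
    cells = [(i, j) for i, length in enumerate(shape) for j in range(length)]

    def fill(idx, T):
        if idx == len(cells):
            yield tuple(tuple(T[(i, j)] for j in range(shape[i]))
                        for i in range(len(shape)))
            return
        i, j = cells[idx]
        lo = 1
        if j > 0:
            lo = max(lo, T[(i, j - 1)])        # rows weakly increase
        if i > 0:
            lo = max(lo, T[(i - 1, j)] + 1)    # columns strictly increase
        for v in range(lo, m + 1):
            T[(i, j)] = v
            yield from fill(idx + 1, T)
        T.pop((i, j), None)

    yield from fill(0, {})

def schur_from_tableaux(shape, xs):
    """Sum of x^T over semistandard tableaux T, evaluated at the point xs."""
    return sum(prod(xs[v - 1] for row in T for v in row)
               for T in ssyt(shape, len(xs)))

def det(M):
    """Leibniz expansion; adequate for the small matrices used here."""
    n = len(M)
    total = 0
    for perm in permutations(range(n)):
        inv = sum(1 for a in range(n) for b in range(a + 1, n) if perm[a] > perm[b])
        total += (-1) ** inv * prod(M[r][perm[r]] for r in range(n))
    return total

def schur_from_determinants(shape, xs):
    """Ratio det(x_j^(lam_i + m - i)) / det(x_j^(m - i)); xs must be distinct."""
    m = len(xs)
    lam = tuple(shape) + (0,) * (m - len(shape))
    num = det([[x ** (lam[i] + m - 1 - i) for x in xs] for i in range(m)])
    den = det([[x ** (m - 1 - i) for x in xs] for i in range(m)])
    return Fraction(num, den)

point = (2, 3, 5)
print(schur_from_tableaux((2, 1), point))
print(schur_from_determinants((2, 1), point))
```

Exact rational arithmetic via `Fraction` avoids rounding issues when dividing the two determinants.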

Basis of

Now we consider algebra of symmetric polynomials in variables.

First define , the subspace of homogeneous symmetric polynomial of degree , and then define .

For example, when , we have , ,

Proposition

is a basis for for all with . (For a fixed , running through all partitions is a basis for .)

Then we introduce a few families of symmetric polynomials for algebra .

- For any partition , define , and . For example, when and , and , and , and .

- Let . Another family of symmetric polynomials is defined as the sum of all different monomials obtained from under permutation. For example, when and , we have , .

Theorem

Suppose that runs over all partitions of of length at most . Then each of the following families is basis of the space :

In particular, with .

\begin{proof}We first prove is a basis. Note that they are linearly independent: If and with , then and have no common monomials. Hence, if , then . Suppose , and we aim to show . We do induction on the order of the element. Take the greatest element in lexicographic order with . Then and . By induction, can be written as linear combination of .To show form a basis, define as the space of skew-symmetric polynomials in . We say is skew-symmetric if . It is easy to see that any skew-symmetric polynomials is divisible by . We have that , where defines an isomorphism between and . One can check that basis of can be given by

where runs over through partitions of of length . Notice that and . Furthermore, as , it deduces that form a basis. Moreover, recall that ^i3a31d and similarly we have , and it yields that .

To show form a basis, suppose that with and , and then

For , we can do it by ^jrsjoc, as it deduces that .

\end{proof}

Definition

Then is a formal series in infinitely many variables, and there are several examples:

For more details on projective limits, see page 489 of Aluffi, *Algebra: Chapter 0* (2009). The sequence of Schur polynomials defines the Schur functions, and the sequence of monomial symmetric polynomials defines the monomial symmetric functions.

Lemma

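We have

$$h_n=\sum_{\lambda\vdash n}z_\lambda^{-1}\,p_\lambda,\qquad e_n=\sum_{\lambda\vdash n}\varepsilon_\lambda\,z_\lambda^{-1}\,p_\lambda,$$

where $z_\lambda=\prod_ii^{m_i}\,m_i!$ for $\lambda=(1^{m_1},2^{m_2},\dots)$, $p_\lambda=p_{\lambda_1}p_{\lambda_2}\cdots$, and $\varepsilon_\lambda=(-1)^{n-\ell(\lambda)}$ with $\ell(\lambda)$ the length of $\lambda$.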

\begin{proof}Recall that

$$P(t)=\frac{d}{dt}\ln H(t)=\frac{H'(t)}{H(t)}.$$

It follows

and it deduces what we desire. Similarly we can prove .

\end{proof}

orthogonal properties

For two families of variables and , we have the following properties

\begin{proof}By ^dbe3dc, we havewhere is the length of .

Note that . Use for and set , we have

Equip with the following form , and it deduces that and . With respect to this inner product, is orthonormal basis of .

\end{proof}

10.9 Characteristic Map

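For a finite group $G$, its irreducible characters form a basis for the space of class functions $R(G)$. Define $R^n=R(S_n)$ and $R=\bigoplus_{n\ge0}R^n$ with $R^0=\mathbb{C}$.

We introduce a product on $R$. For any $f\in R^m$ and $g\in R^n$, where $f$ (resp. $g$) is a character of $S_m$ (resp. $S_n$), define $f\cdot g\in R^{m+n}$ as

$$f\cdot g=\operatorname{Ind}^{S_{m+n}}_{S_m\times S_n}(f\times g).$$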

Theorem

The product on is commutative and associative.

\begin{proof}Define . Then there is a bijectionFor associativity, use .

\end{proof}

Furthermore, we can equip the algebra with an inner product

Definition

Define the characteristic map

Remark. For any and any conjugacy class with representative , we can write the character map as

Theorem

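The characteristic map $\operatorname{ch}$ is an isometry and an algebra isomorphism. Moreover, $\operatorname{ch}(\chi^\lambda)=\mathscr s_\lambda$ and $\operatorname{ch}(\eta^\lambda)=h_\lambda$, where $\chi^\lambda$ is the character of $S^\lambda$ and $\eta^\lambda$ is the character of the permutation module $M^\lambda$.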

\begin{proof}A Generalization

Let be a finite group, and let be an associative algebra. If are functions, we may define a bilinear form with values in

If is a class function on and is a class function on , the generalized Frobenius reciprocity still holds, that is

where and with the convention that if .

For any , there is

Also note that .

Since for and , we have

and so is a homomorphism.

Let be the trivial representation of , then

Recall that the permutation module with . Then yields that

To prove that , note that

and . Now we finish the proof, as is induced .

\end{proof}

Corollary

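The character table of $S_n$ is the transition matrix between the bases $\{p_\mu\}$ and $\{\mathscr s_\lambda\}$ of the space of homogeneous symmetric functions of degree $n$, that is, $p_\mu=\sum_{\lambda\vdash n}\chi^\lambda(\mu)\,\mathscr s_\lambda$.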

\begin{proof}It suffices to show . By ^492b55 we haveand so . It deduces that

Now we finish the proof.

\end{proof}

Frobenius character formula

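If $\lambda=(\lambda_1,\dots,\lambda_m)\vdash n$ with $\lambda_1\ge\dots\ge\lambda_m\ge0$, then for any $\mu\vdash n$ the value $\chi^\lambda(\mu)$ is the coefficient of $x_1^{l_1}\cdots x_m^{l_m}$ in the polynomial

$$\Delta(x)\,p_\mu(x_1,\dots,x_m),$$

where $\Delta(x)=\prod_{i<j}(x_i-x_j)$ and $l_j=\lambda_j+m-j$ for $1\le j\le m$.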

Remark. This is the formula stated in Section 10.6.

\begin{proof}Recall that $\Delta(x)=\det\left(x_j^{m-i}\right)=\prod_{i<j}(x_i-x_j)$ and

$$\mathscr s_\lambda=\frac{\left| \begin{matrix} x_1^{\lambda_1+m-1} & \cdots & x_m^{\lambda_1+m-1} \\ x_1^{\lambda_2+m-2} & \cdots & x_m^{\lambda_2+m-2} \\ \vdots & & \vdots \\ x_1^{\lambda_m} & \cdots & x_m^{\lambda_m} \end{matrix} \right|}{\left| \begin{matrix} x_1^{m-1} & \cdots & x_m^{m-1} \\ x_1^{m-2} & \cdots & x_m^{m-2} \\ \vdots & & \vdots \\ 1 & \cdots & 1 \end{matrix} \right|}.$$

By the preceding corollary, we have $p_\mu=\sum_\lambda\chi^\lambda(\mu)\,\mathscr s_\lambda$ and it deduces that

$$\Delta(x)\,p_\mu=\sum_\lambda\chi^\lambda(\mu)\det\left(x_j^{\lambda_i+m-i}\right).$$

Therefore, the coefficient of $x_1^{l_1}\cdots x_m^{l_m}$ with $l_j=\lambda_j+m-j$ on the right-hand side is $\chi^\lambda(\mu)$.

\end{proof}